Is auto-building links at different tiers away from the money site still effective in 2018?

Let’s find out!

- What Is PageRank: The Origins And Its Development.

- Has Google Abandoned The PageRank Algorithm?

- Watch Out: PageRank Is Updating A New Invention (If You Already Know About PageRank, Please Skip The Below And Jump Here)

- PageRank Development Outlook

What Is PageRank: The Origins And Its Development.

In the past, PageRank was considered a value based on the number of links pointing to a particular website. Although PageRank was researched and invented by Larry Page and developed by Sergey Brin as part of a project on a new search engine (the patent was granted in 2001), whenever anyone mentions PageRank, we automatically think of Google PageRank rather than the original 2001 invention.

According to research I read in 2007, the 2001 PageRank algorithm was widely applied from 2003 to 2006: it measured the number of links pointing to a particular page and calculated (+) points and (-) points based on the dofollow and nofollow attributes.

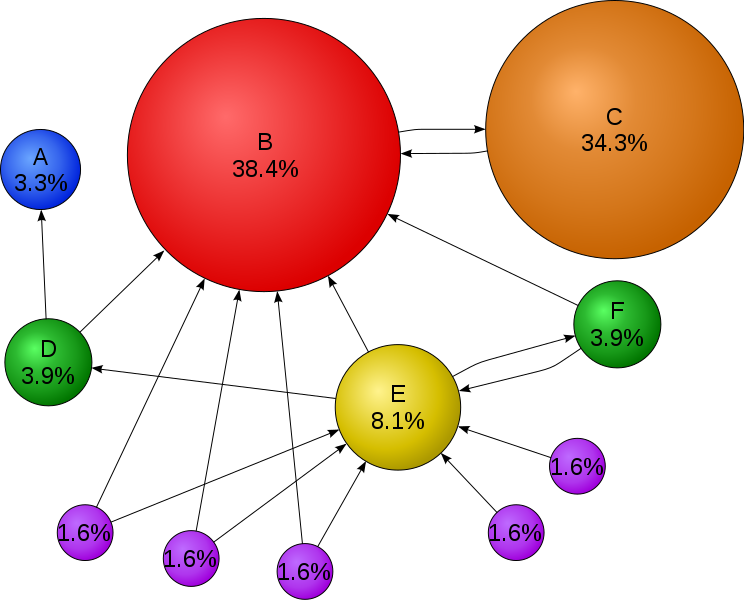

Although website C has fewer inbound links than website E, the PageRank that E ends up with may be much lower than that of website C.
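To see why a page with fewer inbound links can still outrank a page with more, here is a minimal Python sketch of the classic textbook PageRank iteration. It is only an illustration, not Google's production algorithm: the function name `pagerank`, the damping factor of 0.85, and the tiny link graph are all assumptions made up for this example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Textbook PageRank by repeated redistribution of rank.

    `links` maps each page to the list of pages it links to.
    The damping value and iteration count are illustrative assumptions.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with the small "random jump" share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over everyone.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical graph: E has five inbound links from small pages,
# C has only one inbound link, but it comes from the strong page B.
example_links = {
    "x1": ["B", "E"],
    "x2": ["B", "E"],
    "x3": ["B", "E"],
    "x4": ["B", "E"],
    "x5": ["B", "E"],
    "E": ["B"],
    "B": ["C"],
    "C": ["B"],
}
example_rank = pagerank(example_links)
for page in sorted(example_rank, key=example_rank.get, reverse=True):
    print(page, round(example_rank[page], 3))
```

In this made-up graph, C ends up well ahead of E, because its single link comes from a page that itself holds a lot of rank, while E's five links come from pages with almost none.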

Around 2007 to 2008, a few updates were discussed on many SEO forums at that time. Google PageRank was no longer measured simply by the number of links but also by values assigned to categories of links: reciprocal links, forum links, blog links, web directory links, etc.

That may sound a bit more complex, but in fact it was still pretty basic and poorly done. By 2009, Google PageRank had started to detect whether a forum link came from a new topic or from a reply, as well as backlinks from free Web 2.0 blogs to pumper sites, yet a tremendous number of links could still trick PageRank to a certain extent.

From 2009 to 2014, and especially in 2012 with famous algorithms such as Panda and Penguin, Google PageRank (abbreviated as GGPR) improved significantly. Apart from categorizing links, GGPR could assess link quality quite accurately.

To date, every newly released algorithm update has had a certain effect not only on GGPR but also on the SERPs in general. Other criteria such as website interface, IP address, front-end structure, webmaster email, and more are thought to be checked and evaluated by GGPR, though Google has never admitted this and probably never will.

Has Google Abandoned The PageRank Algorithm?

Here is a video in which Google discusses this: “PageRank is no longer a criterion to evaluate SERP”

“We will probably not going to be updating it [PageRank] going forward, at least in the Toolbar PageRank.” Okay, there are 2 :

Watch Out: PageRank Is Updating A New Invention.

Recently, on his website (seobythesea.com), Bill Slawski shared a patented invention with the following details:

Inventors: Hajaj; Nissan (Emerald Hills, CA)

Applicant: Google LLC

Assignee: Google LLC (Mountain View, CA)

Family ID: 1000001478409

Appl. No.: 14/886,990

A quick summary of this invention:

“One embodiment of the present invention provides a system that produces a ranking for web pages. During operation, the system receives a set of pages to be ranked, wherein the set of pages are interconnected with links. The system also receives a set of seed pages which include outgoing links to the set of pages. The system then assigns lengths to the links based on properties of the links and properties of the pages attached to the links.

The system next computes shortest distances from the set of seed pages to each page in the set of pages based on the lengths of the links between the pages. Next, the system determines a ranking score for each page in the set of pages based on the computed shortest distances. The system then produces a ranking for the set of pages based on the ranking scores for the set of pages.”

“One possible variation of PageRank that would reduce the effect of these techniques is to select a few “trusted” pages (also referred to as the seed pages) and discovers other pages which are likely to be good by following the links from the trusted pages. For example, the technique can use a set of high quality seed pages ($s_1, s_2, \ldots, s_n$), and for each seed page $i = 1, 2, \ldots, n$, the system can iteratively compute the PageRank scores for the set of the web pages $P$ using the formulae:

$$\forall p \neq s_i \in P: \quad R_i(p) = \sum_{q \to p} R_i(q)\, w(q \to p)$$

where $R_i(s_i) = 1$, and $w(q \to p)$ is an optional weight given to the link $q \to p$ based on its properties (with the default weight of 1).

Generally, it is desirable to use a large number of seed pages to accommodate the different languages and a wide range of fields which are contained in the fast growing web contents. Unfortunately, this variation of PageRank requires solving the entire system for each seed separately. Hence, as the number of seed pages increases, the complexity of computation increases linearly, thereby limiting the number of seeds that can be practically used.

Hence, what is needed is a method and an apparatus for producing a ranking for pages on the web using a large number of diversified seed pages without the problems of the above-described techniques.”

Sourced From: Producing A Ranking For Pages Using Distances In A Web-Link Graph

In short: Google picks out a group of trusted pages and ranks them manually (I call them Google Trust Pages; in the original post, they are called Trust Pages). From there, it follows the links from those pages, measures how far away each layer of linked pages is, and scores those pages accordingly. Finally, PageRank gathers that data as a partial evaluation that will gradually feed into PageRank itself and, surely, the SERPs.
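The idea of "shortest distance from a trusted page" can be sketched in a few lines of Python. This is my own rough illustration using a multi-source Dijkstra search, not the method from the patent itself: the link lengths, the page names, and the choice to simply sort pages by distance are all assumptions, since the summary above does not say how lengths or final scores are actually computed.

```python
import heapq


def seed_distances(link_lengths, seeds):
    """Shortest distance from the nearest seed page to every reachable page.

    `link_lengths[page]` is a dict {target_page: length_of_link}.
    Lengths here are hypothetical; shorter means a "better" link.
    """
    dist = {seed: 0.0 for seed in seeds}
    heap = [(0.0, seed) for seed in seeds]
    heapq.heapify(heap)
    while heap:
        d, page = heapq.heappop(heap)
        if d > dist.get(page, float("inf")):
            continue  # stale queue entry, a shorter path was already found
        for target, length in link_lengths.get(page, {}).items():
            new_d = d + length
            if new_d < dist.get(target, float("inf")):
                dist[target] = new_d
                heapq.heappush(heap, (new_d, target))
    return dist


def rank_by_distance(link_lengths, seeds):
    """Order pages from closest to farthest from the seed set (assumed scoring)."""
    dist = seed_distances(link_lengths, seeds)
    return sorted(dist, key=lambda page: (dist[page], page))


# Hypothetical link graph with made-up lengths.
example_graph = {
    "trusted": {"blog_a": 1.0, "blog_b": 2.5},
    "blog_a": {"money_site": 1.0, "tier3": 3.0},
    "blog_b": {"money_site": 1.0},
}
example_dist = seed_distances(example_graph, ["trusted"])
example_order = rank_by_distance(example_graph, ["trusted"])
print(example_dist)
print(example_order)
```

Pages that no seed can reach never show up in the result at all, which matches the intuition that links far away from (or disconnected from) any trusted page contribute little or nothing.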

Interesting? If you need more in-depth information, more details can be found in the original post mentioned above.

PageRank Development Outlook

According to this new invention, the closer the links you build are to the first layer around a trusted page, the better your ranking might be. Writing blog posts and cross-blogging on privileged pages would earn you big points on your SEO scale. Who knows, later on, the pages that belong to this first layer might join the Trust Page list themselves. Over time, once the Trust Page list has grown significantly, this may no longer be just a partial factor in the evaluation but become an official PageRank algorithm.

What should we do?

We can never know how Google will update its algorithms in the future, but we can predict what it might be interested in by putting ourselves in its shoes.

For sure, the search results have to match what users are looking for. If the results are a total failure, users will simply leave and consider the search engine unreliable.

If you love a good night’s sleep and don’t want to keep coming back to fix your tricks, worried that a new algorithm could come out and penalize your website at any time, white hat SEO will be your best pal. That is also my principle. Still, doing SEO without knowing about black hat practices means you have experienced only half of the SEO world. To find out why, see my blog post: 2018: Black or White hat SEO in Vietnam?